Building DocBot: The Journey So Far

The development of DocBot has been an intense and rewarding journey, involving infrastructure setup, database modeling, version control, and rigorous testing. Throughout this process, I've been leveraging ChatGPT to upskill myself and push forward in areas like FastAPI, PostgreSQL, Docker, and Alembic versioning. Here’s a detailed breakdown of how we got here, the challenges we faced, and the milestones we've achieved.

Infrastructure and Setup

One of the foundational tasks was setting up the right infrastructure. We decided on a Docker-based setup to ensure environment consistency. This allowed for easy sharing and collaboration while eliminating "it works on my machine" issues.

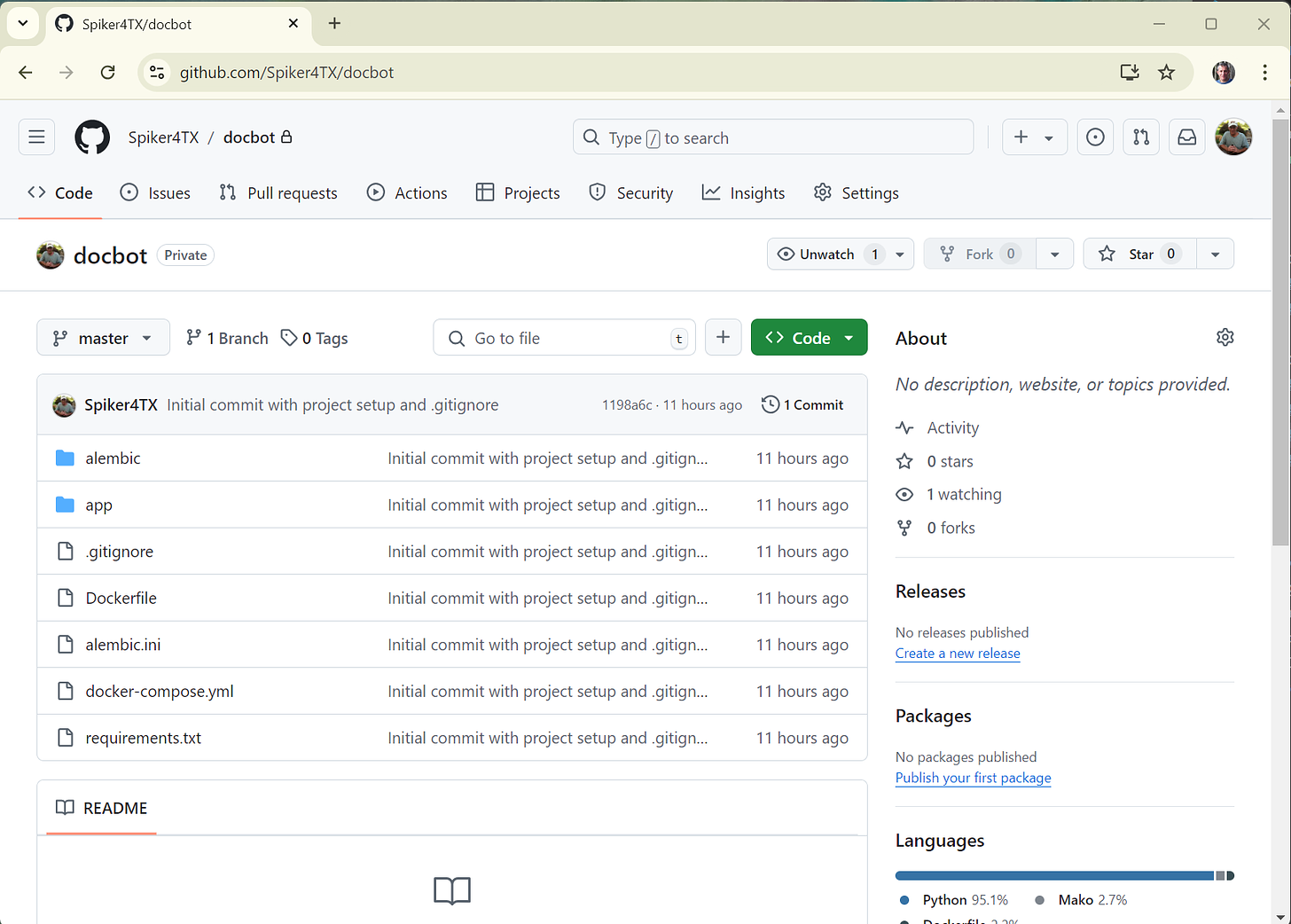

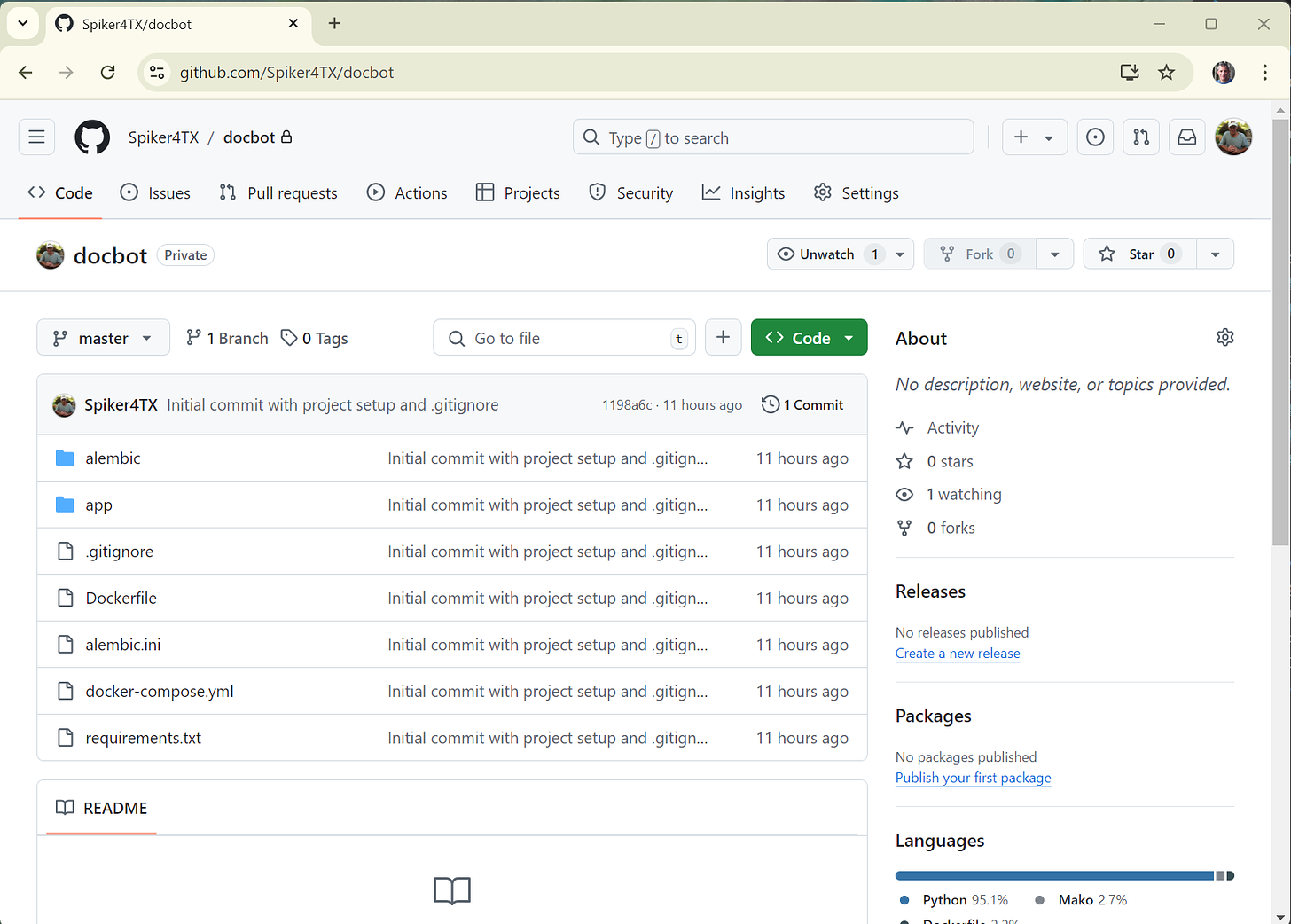

To keep the development process clean and reproducible, we containerized FastAPI for the backend and used PostgreSQL as our database. Version control was managed with GitHub, allowing us to track all code changes seamlessly. Here’s a screenshot of our GitHub repository after the initial commit.

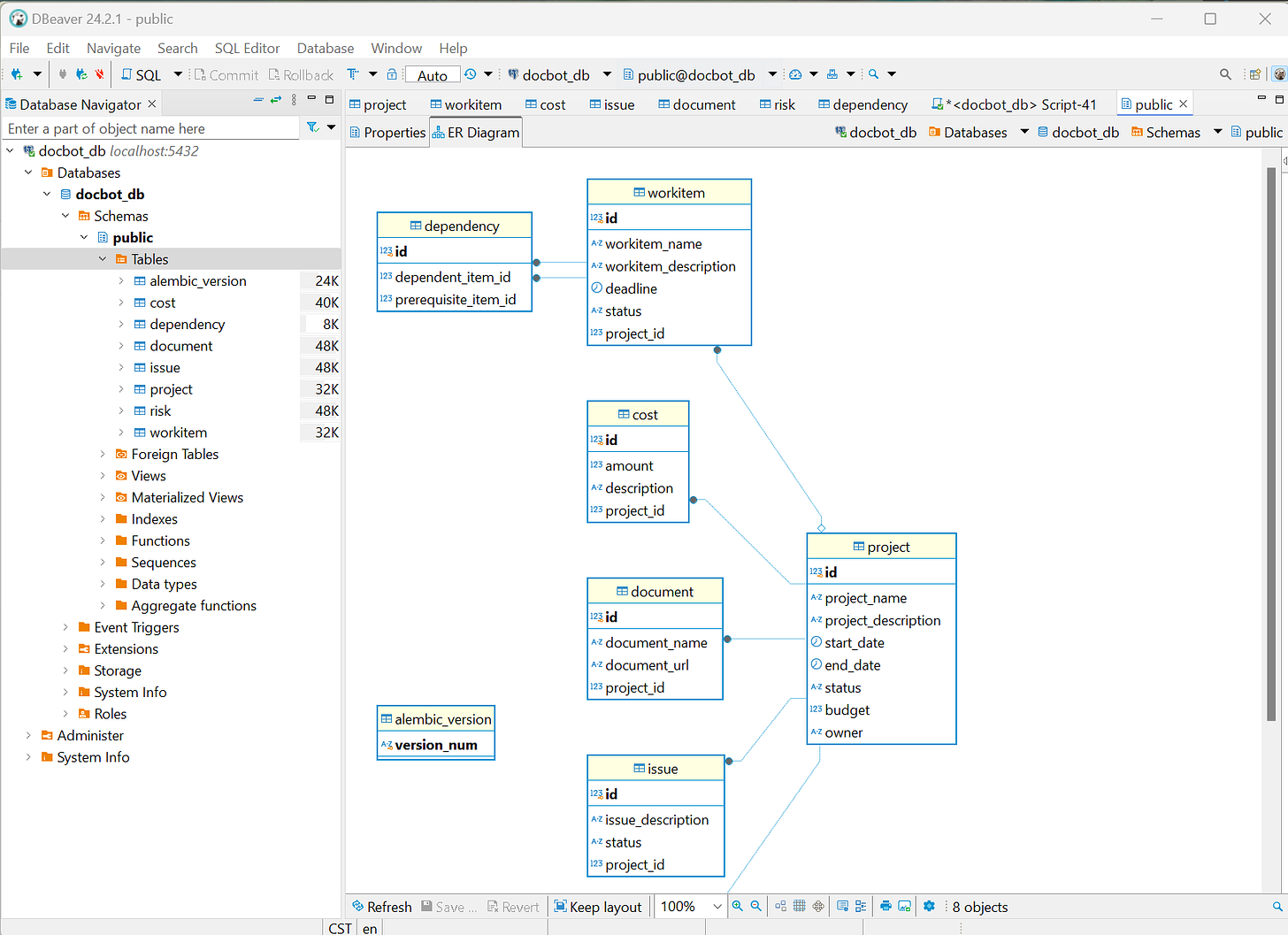

Database Modeling and Alembic Migrations

With the infrastructure in place, our next focus was the database. We used SQLModel, a Python library that integrates SQLAlchemy and Pydantic, to create models for Projects, WorkItems, Documents, Risks, Issues, and Costs.

Alembic was crucial in managing database schema changes and migrations. It let us record each schema change as a versioned migration and roll out new features without disrupting the existing database. Initially, Alembic's versioning and migration workflow was hard to follow, but after some trial and error, we settled on a solid strategy.

Here’s a glimpse of the database structure using DBeaver, our go-to database management tool:

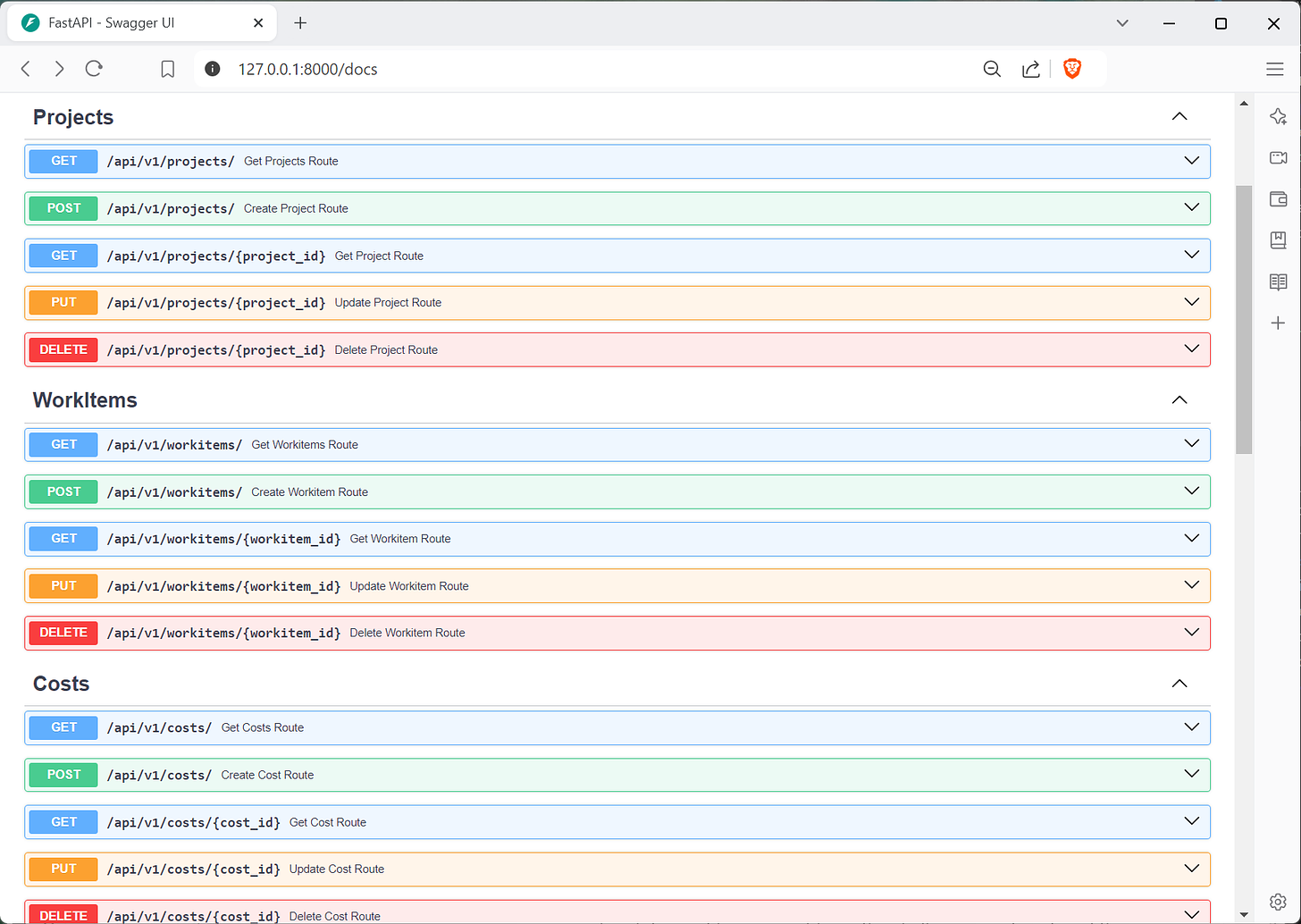

FastAPI Endpoints and Refactoring

Once the models and the database were in place, we shifted focus to creating the API endpoints using FastAPI. FastAPI's speed and simplicity allowed us to quickly build out the necessary CRUD operations for each model. The refactoring process was critical here as we organized the models, routes, and CRUD operations into logical groupings for scalability.

After refactoring, here’s how the current API endpoints look in FastAPI:

This ensures that DocBot is easily extensible, allowing future additions like Resources, Change Requests, Timesheets, and Financials to seamlessly integrate into the project.

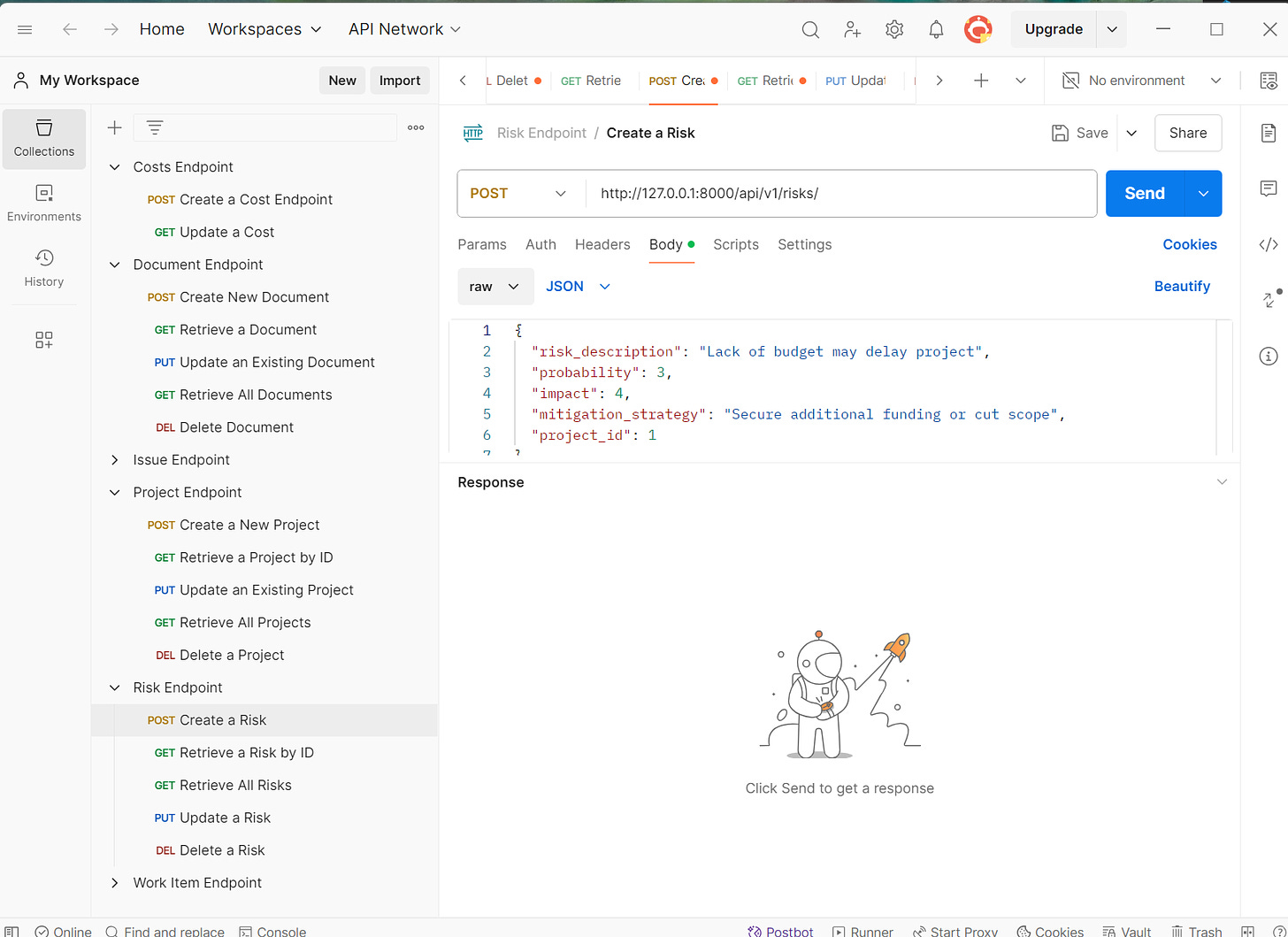

Testing and Cascading Deletes

Testing was another key focus. We used Postman to rigorously test each endpoint, ensuring that the CRUD operations performed as expected. Special attention was given to cascading deletes to verify that removing a parent record like a Project also removed its associated WorkItems, Documents, and Issues.
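For readers unfamiliar with cascading deletes, the behaviour being verified can be reproduced in a few lines. The sketch below uses Python's built-in `sqlite3` module rather than PostgreSQL, and the table and column names are assumptions, but the `ON DELETE CASCADE` clause on the foreign key works the same way in both databases.

```python
import sqlite3
from typing import Dict


def counts_after_project_delete() -> Dict[str, int]:
    conn = sqlite3.connect(":memory:")
    # SQLite enforces foreign keys only when this pragma is on;
    # PostgreSQL enforces them by default.
    conn.execute("PRAGMA foreign_keys = ON")
    conn.executescript(
        """
        CREATE TABLE project (
            id INTEGER PRIMARY KEY,
            name TEXT NOT NULL
        );
        CREATE TABLE workitem (
            id INTEGER PRIMARY KEY,
            title TEXT NOT NULL,
            project_id INTEGER NOT NULL REFERENCES project(id) ON DELETE CASCADE
        );
        CREATE TABLE document (
            id INTEGER PRIMARY KEY,
            title TEXT NOT NULL,
            project_id INTEGER NOT NULL REFERENCES project(id) ON DELETE CASCADE
        );
        INSERT INTO project (id, name) VALUES (1, 'Alpha'), (2, 'Beta');
        INSERT INTO workitem (title, project_id)
            VALUES ('Plan', 1), ('Build', 1), ('Review', 2);
        INSERT INTO document (title, project_id) VALUES ('Charter', 1);
        """
    )
    # Deleting the parent row removes its children through the cascade.
    conn.execute("DELETE FROM project WHERE id = 1")
    counts = {
        table: conn.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]
        for table in ("project", "workitem", "document")
    }
    conn.close()
    return counts
```

Only the rows belonging to the deleted project disappear; the second project and its work item are left untouched, which is exactly what an endpoint test for cascading deletes should confirm.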

Here’s an example of Postman testing one of our API endpoints with sample data:

Version Control with GitHub

Keeping track of code changes and managing collaboration was essential to avoid issues with multiple versions. We set up a GitHub repository early on and used branching and pull requests to manage the flow of code. This made the development process smoother and kept our codebase organized and accessible.

Here’s a screenshot of our GitHub repository, showing the initial commit and version control in action:

Docker and Environment Consistency

Throughout the project, we ran our services in Docker containers, which ensured that everything, from FastAPI to PostgreSQL, ran consistently across environments. Docker has been crucial in eliminating discrepancies between the development, testing, and production environments.

Restarting services was sometimes tricky, but with practice, we’ve mastered the Docker Compose workflow to rebuild and restart services without breaking a sweat.

Upskilling with ChatGPT

Throughout this process, ChatGPT has been instrumental in helping me upskill. By leveraging ChatGPT, I was able to:

Get real-time feedback and solutions on errors and issues that cropped up in Docker, FastAPI, and PostgreSQL.

Learn more about advanced database concepts like cascading deletes and foreign key relationships.

Accelerate the learning curve for Alembic versioning and its integration with SQLModel.

Refactor code and organize the project for future scalability.

The key takeaway has been the ability to learn in real time and get unstuck quickly. This sped up my learning and helped me make better-informed decisions as the project took shape.

Next Steps

Now that the foundation is strong, we’ll focus on expanding DocBot with new modules like:

Resource Management: Tracking who’s working on what and assigning tasks more effectively.

Financial Management: Adding support for budgets, expenses, and cost tracking.

Change Requests and Timesheets: Allowing users to manage project scope changes and track the time spent on each task.

But before that, we’re ensuring that all current functionality, including cascading deletes, works as intended.

Conclusion

DocBot’s journey has been complex but rewarding. We’ve set up infrastructure, version control, and testing protocols that give us a strong foundation for future growth. More importantly, tools like Docker, FastAPI, and ChatGPT have enabled rapid progress and continuous learning along the way.

Stay tuned for more updates as we continue expanding DocBot's capabilities!